Introduction

We ran a test at Visbee a while back. We asked ChatGPT the same question, “What’s the best CRM for small businesses?”, fifty times over the course of a week. Same question. Same platform. Different sessions each time.

Salesforce appeared in every single response. HubSpot in 48 out of 50. Zoho CRM in about 30. Pipedrive in maybe 15. Freshsales showed up twice. And plenty of CRMs that are genuinely good products, used by thousands of companies, didn’t appear once.

The results weren’t random. They were remarkably consistent. And when we dug into why certain brands showed up and others didn’t, we found patterns that most companies aren’t even thinking about yet.

This article is about those patterns. If you’ve ever wondered what’s actually going on when ChatGPT picks one brand over another, this is for you.

It’s not random, but it’s not a ranking either

The first thing to understand is that ChatGPT doesn’t have a list of “top brands” stored somewhere. It doesn’t rank brands the way Google ranks websites. There’s no equivalent of a PageRank score or a domain authority number.

What ChatGPT does is generate text based on probability. When it’s writing a response to “What’s the best CRM?”, it’s essentially predicting which words should come next, based on everything it was trained on and (increasingly) what it finds through real-time web search.

So when ChatGPT recommends Salesforce, it’s not because Salesforce paid for placement or because some algorithm scored it highest. It’s because the statistical weight of Salesforce in the model’s training data, across millions of documents, is enormous. The model has seen “Salesforce” in the context of “best CRM” so many times that it becomes one of the most probable things to generate next.

This sounds abstract, but the practical implications are concrete. If you want your brand to show up, you need to increase its statistical presence in the places that feed into AI training and real-time search.
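To make the probability idea concrete, here is a toy sketch. It is not how ChatGPT is implemented: the scores are made-up numbers, `NicheCRM` is a hypothetical brand, and a real model picks tokens over a huge vocabulary rather than choosing among five brand names. It only shows how a larger statistical weight turns into a higher chance of being generated.

```python
import math
import random

# Hypothetical scores a model might assign to each brand as the next thing
# to write after "The best CRM for small businesses is". Made-up numbers.
scores = {
    "Salesforce": 5.0,
    "HubSpot": 4.5,
    "Zoho": 3.0,
    "Pipedrive": 2.0,
    "NicheCRM": -1.0,  # hypothetical brand with little online presence
}

def softmax(raw):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(raw.values())
    exps = {name: math.exp(value - peak) for name, value in raw.items()}
    total = sum(exps.values())
    return {name: e / total for name, e in exps.items()}

probs = softmax(scores)

# Simulate 1,000 independent "sessions", each picking one brand.
rng = random.Random(0)
names = list(probs)
weights = [probs[n] for n in names]
counts = {name: 0 for name in names}
for _ in range(1000):
    counts[rng.choices(names, weights=weights, k=1)[0]] += 1

for name in names:
    print(f"{name}: p={probs[name]:.3f}, picked {counts[name]} times")
```

In this toy setup the highest-scoring brand is picked in most sessions and the low-scoring one almost never, which mirrors the pattern in the fifty-query test: no stored ranking, just uneven probabilities.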

The two knowledge sources

ChatGPT draws from two distinct sources when generating a response, and understanding the difference matters.

Source 1: Training data (the long-term memory)

ChatGPT’s base knowledge comes from the massive dataset it was trained on. Think of this as its long-term memory. This data was collected from the internet before a specific cutoff date, and it includes:

- Millions of web pages, blog posts, and documentation sites

- Reddit discussions and forum threads (these carry surprisingly heavy weight)

- News articles and press coverage

- Wikipedia and reference sites

- Academic papers

- Product review sites and comparisons

The key thing about training data: it’s frozen. Once the model is trained, that knowledge doesn’t change until the next training run. If your brand wasn’t prominent in the training data, you’re starting from a disadvantage that content published today won’t immediately fix.

We noticed this clearly when testing newer products at Visbee. Brands that launched in 2025 had significantly worse AI visibility than established competitors, even when the newer products had better reviews and more features. The AI simply hadn’t been trained on enough data about them.

Source 2: Real-time search (the fresh context)

Current versions of ChatGPT (and most other AI assistants) can search the web in real time to supplement their training data. This is where things get more interesting and more actionable.

When ChatGPT performs a web search as part of generating a response, it typically:

- Sends a search query to the web (similar to a Google search)

- Retrieves and reads several top-ranking pages

- Extracts relevant information

- Blends it with its existing knowledge to form the response

This means your traditional SEO work has a direct line to your AI visibility. Pages that rank well in web search are more likely to be retrieved and cited by ChatGPT.

But there’s a nuance. ChatGPT doesn’t always search the web. For well-established topics where the training data is sufficient, it might skip the search entirely and answer from memory. This is why training data presence remains important even as real-time search becomes more common.
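The search-then-blend flow described above can be sketched roughly as follows. This is an illustration under loud assumptions: `FAKE_INDEX`, `search`, and `build_context` are hypothetical names, the keyword-overlap ranking stands in for a real search engine, and the `needs_search` flag stands in for whatever internal logic decides whether to search at all.

```python
from dataclasses import dataclass

@dataclass
class Page:
    url: str
    text: str

# Stand-in for the web: a real assistant would query a search engine here.
FAKE_INDEX = [
    Page("https://example.com/best-crm", "Salesforce and HubSpot are popular CRM choices."),
    Page("https://example.com/crm-reviews", "Pipedrive is a CRM praised for its simple pipeline view."),
    Page("https://example.com/recipes", "A quick recipe for tomato soup."),
]

def search(query, index, top_k=2):
    """Rank pages by naive keyword overlap with the query and keep the top_k matches."""
    terms = set(query.lower().split())
    scored = []
    for page in index:
        words = set(page.text.lower().replace(".", "").split())
        overlap = len(terms & words)
        if overlap:
            scored.append((overlap, page))
    scored.sort(key=lambda item: item[0], reverse=True)  # stable sort keeps index order on ties
    return [page for _, page in scored[:top_k]]

def build_context(query, memory_answer, index, needs_search):
    """Blend retrieved snippets with what the model already 'remembers'."""
    if not needs_search:
        # Well-covered topic: answer from training data alone.
        return {"sources": [], "context": memory_answer}
    pages = search(query, index)
    snippets = [page.text for page in pages]
    return {
        "sources": [page.url for page in pages],
        "context": " ".join([memory_answer] + snippets),
    }

result = build_context("best crm", "Salesforce is widely used.", FAKE_INDEX, needs_search=True)
print(result["sources"])
print(result["context"])
```

The point of the sketch is the branch in `build_context`: a page can only influence the answer if it is retrieved, and it is only retrieved if a search happens and the page ranks for the query.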

What actually makes a brand show up

After running thousands of queries across different industries, we’ve identified the factors that seem to matter most. Some were expected. Some surprised us.

Factor 1: Sheer volume of online presence

This is the most straightforward one. Brands that are mentioned frequently across many different types of sources are more likely to appear in AI responses. It’s not about any single article or review. It’s about the cumulative weight of your brand across the internet.

Salesforce dominates CRM recommendations partly because you can’t read about CRMs anywhere without encountering Salesforce. It’s in the comparison articles, the G2 reviews, the Reddit threads, the industry reports, the tech news, the conference recaps. That accumulated presence creates statistical gravity that pulls the AI toward mentioning it.

Factor 2: Context of mentions

Volume alone isn’t enough. The context matters.

We tested this by comparing brands that had similar volumes of online mentions but very different AI visibility. The difference usually came down to how they were mentioned.

Brands that appeared in “best of” lists, comparison articles, and recommendation-style content had much higher AI visibility than brands that were mostly mentioned in their own press releases or company blog posts. The AI seems to weight third-party, recommendation-oriented content more heavily than first-party marketing content.

Makes sense if you think about it. When you ask ChatGPT “What’s the best X?”, it’s looking for content that already answers that question. If the internet is full of articles saying “Brand Y is one of the best tools for X”, the AI has ready-made material to draw from.

Factor 3: Sentiment

This one surprised us a bit. We expected AI to be neutral and just report facts. But ChatGPT clearly factors in sentiment when making recommendations.

Brands with overwhelmingly positive mentions (high review scores, positive Reddit discussions, favourable press coverage) get recommended more confidently. ChatGPT will say things like “Brand X is widely regarded as…” or “Brand X is a popular choice because…”

Brands with mixed or negative sentiment get more cautious treatment. ChatGPT might say “Brand X is an option, though some users have reported issues with…” or skip the brand entirely.

We’ve tracked this in Visbee and the correlation between online sentiment and AI recommendation confidence is strong. If you have a reputation problem online, it will show up in your AI visibility.

Factor 4: Specificity of positioning

Brands with clear, specific positioning tend to perform better in AI responses than brands that try to be everything to everyone.

A concrete example: when we asked “What’s the best project management tool for software development teams?”, Jira appeared almost every time. When we asked “What’s the best project management tool for marketing teams?”, Asana and Monday.com dominated. Both Jira and Asana are general-purpose tools, but their online positioning is specific enough that AI associates them with different use cases.

If your brand’s positioning is vague (“we help businesses grow” or “the all-in-one platform”), the AI doesn’t have a strong signal for when to recommend you. Niche brands with clear messaging often outperform larger competitors in their specific domain.

Factor 5: Recency and freshness

For queries where real-time search kicks in, recent content carries more weight. A brand that published a relevant, high-quality article last month will have an edge over one whose last blog post was two years ago.

This is one area where smaller brands can compete. You don’t need a massive backlog of content. You need a consistent stream of relevant, current material.

Factor 6: Source authority

Not all mentions are created equal. A mention in TechCrunch, Forbes, or an established industry publication carries more weight than a mention on a random blog. Similarly, a highly upvoted Reddit answer or a well-cited G2 review matters more than a generic directory listing.

The AI, like a human researcher, implicitly weights sources by their perceived authority.

What we learned from our own testing

Running thousands of queries through Visbee gave us some observations that aren’t obvious from the outside:

AI responses are more consistent than you’d expect. We assumed there would be a lot of randomness. There isn’t. For a given query, the same brands tend to appear in roughly the same order, session after session. Minor variations happen, but the core recommendations are stable.

Different AI platforms recommend different brands. ChatGPT, Claude, and Perplexity often agree on the top 1-2 brands, but diverge on the rest. This is because they were trained on different datasets and use different retrieval approaches. Monitoring just one platform gives you an incomplete picture.
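One simple way to put a number on how much two platforms agree is the Jaccard overlap of the brand sets they recommend. The sketch below is only an illustration: the platform names and brand lists are made-up placeholders, not measured results, and Jaccard ignores the order in which brands appear.

```python
def jaccard(a, b):
    """Share of brands two recommendation lists have in common (0.0 to 1.0)."""
    set_a, set_b = set(a), set(b)
    if not set_a and not set_b:
        return 1.0  # two empty lists are treated as identical
    return len(set_a & set_b) / len(set_a | set_b)

# Made-up example lists for three hypothetical platforms.
recommendations = {
    "platform_a": ["Brand 1", "Brand 2", "Brand 3", "Brand 4"],
    "platform_b": ["Brand 1", "Brand 2", "Brand 5", "Brand 3"],
    "platform_c": ["Brand 1", "Brand 2", "Brand 4", "Brand 5"],
}

# Compare every pair of platforms once.
names = sorted(recommendations)
overlaps = {
    (x, y): jaccard(recommendations[x], recommendations[y])
    for i, x in enumerate(names)
    for y in names[i + 1:]
}

for pair, score in overlaps.items():
    print(pair, round(score, 2))
```

A score near 1.0 means two platforms recommend nearly the same brands. Agreement on only the top one or two names, as described above, shows up as a noticeably lower score.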

Category leaders have a massive advantage. The “rich get richer” effect is real. Once a brand is established as a top recommendation, the AI’s confidence in recommending it grows, which generates more third-party content mentioning it, which further reinforces its position. Breaking into this cycle is hard but not impossible.

Negative content has outsized impact. A single viral negative article or a widely-shared critical Reddit thread can move the needle more than dozens of positive mentions. This is because negative content often gets framed as a “warning” or “thing to watch out for”, which works directly against a brand when the query asks for a recommendation.

[Screenshot: Visbee sentiment analysis showing how positive vs negative mentions affect visibility score]

Common misconceptions

A few things that people often get wrong about how AI recommends brands.

“I can pay to get recommended”

You can’t. Unlike Google Ads, there’s no way to buy your way into ChatGPT’s responses. Some SEO agencies are starting to offer “AI optimization” services that promise this, and honestly, be sceptical. There’s no shortcut. The factors that drive AI recommendations are the same ones that drive genuine online authority: real content, real reputation, real mentions.

“My website SEO is enough”

It helps, but it’s not sufficient on its own. AI draws from many sources beyond web search results: Reddit discussions, forum posts, review sites, YouTube video descriptions, and podcast transcripts. Your website is one piece of a much larger puzzle.

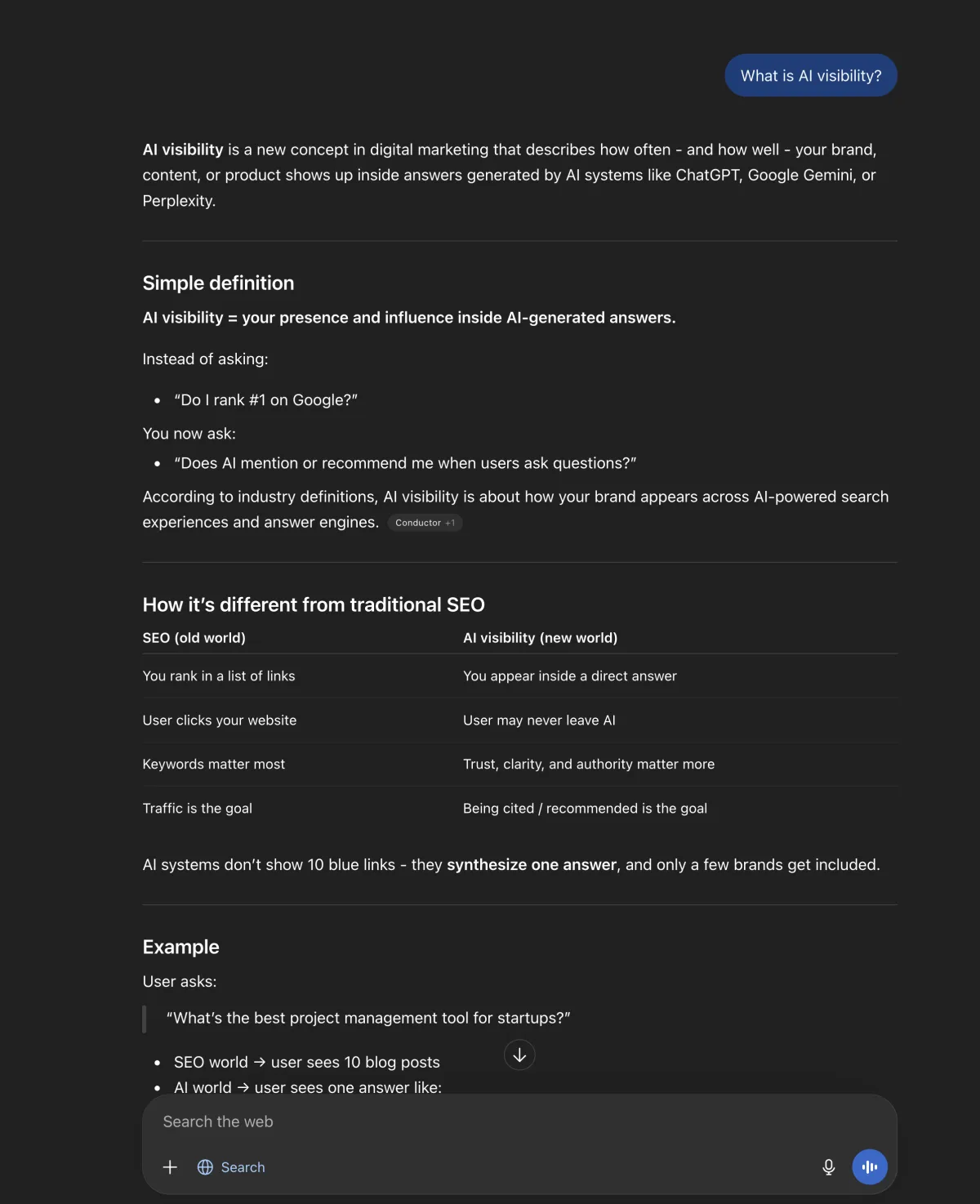

“It’s the same as traditional SEO”

There’s overlap, but the mechanics are different. Traditional SEO optimises for Google’s ranking algorithm, which heavily weights backlinks, keyword usage, and technical factors. AI visibility is shaped by a broader set of signals, and the output is completely different: instead of a ranked list of links, you get a conversational response that might mention your brand, your competitor, both, or neither.

“Only big brands can win”

Not true. We’ve seen niche B2B tools outperform household names in their specific domain. If you’re the most authoritative voice on a narrow topic, AI will notice. The bar for smaller brands is actually lower than in traditional SEO, where domain authority gives large sites a structural advantage.

What you can do about it

We covered this in more detail in our article about what AI visibility is and why it matters, but here’s a focused summary for the ChatGPT context specifically:

- Get mentioned in third-party recommendation content. Comparison articles, “best of” lists, and review roundups are what ChatGPT draws from most heavily. Pursue guest posts, product reviews, and inclusion in industry comparisons.

- Be active on Reddit and community forums. Reddit is disproportionately influential in AI training data. Authentic, helpful participation in relevant subreddits (not self-promotion) builds the kind of mentions that AI picks up on.

- Keep your content fresh. Regularly publish and update content in your domain. This matters for real-time search retrieval.

- Sharpen your positioning. The clearer your brand’s positioning, the easier it is for AI to know when to recommend you. “The visibility monitoring tool for AI search” is better than “the all-in-one marketing platform.”

- Monitor the results. Use a tool like Visbee to track what ChatGPT actually says about your brand, how often, and in what context. Without measurement, you’re flying blind.
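If you want a rough do-it-yourself version of that measurement, the core of it is just counting. The sketch below assumes you have already collected a set of responses to the same question and saved them as plain text. The sample responses are invented for illustration, and `mention_rates` is a hypothetical helper, not part of Visbee or any ChatGPT API.

```python
import re
from collections import Counter

def mention_rates(responses, brands):
    """Return the share of responses that mention each brand at least once."""
    counts = Counter()
    for text in responses:
        for brand in brands:
            # Whole-word, case-insensitive match so "Zoho" does not match "Zohoo".
            pattern = r"\b" + re.escape(brand) + r"\b"
            if re.search(pattern, text, flags=re.IGNORECASE):
                counts[brand] += 1
    total = len(responses)
    return {brand: (counts[brand] / total if total else 0.0) for brand in brands}

# Invented responses standing in for answers saved from separate sessions.
saved_responses = [
    "I'd suggest Salesforce or HubSpot for most small teams.",
    "Salesforce is a common pick; Zoho CRM is a cheaper alternative.",
    "HubSpot and Salesforce both work well here.",
]

rates = mention_rates(saved_responses, ["Salesforce", "HubSpot", "Zoho CRM", "Pipedrive"])
for brand, rate in rates.items():
    print(f"{brand}: mentioned in {rate:.0%} of responses")
```

Plain substring counting like this says nothing about sentiment or position within the answer, so treat it as a first signal rather than a full picture.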

Summary

ChatGPT’s brand recommendations aren’t random, but they’re also not a simple ranking system. They emerge from the statistical weight of your brand across training data and real-time search results.

The brands that get recommended share common traits: they’re mentioned frequently across diverse, authoritative sources, they have clear positioning, they maintain positive sentiment, and they keep publishing relevant content.

The good news is that this is all influenceable. You can’t game the system, but you can build the kind of genuine online presence that earns AI recommendations. And with tools like Visbee, you can actually see whether it’s working.

If you want to know where you stand right now, start with a simple test. Ask ChatGPT about your product category and see what comes back. Then ask about your specific brand. The gap between those two answers will tell you a lot about where to focus.

FAQ

Does ChatGPT always recommend the same brands?

Mostly, yes. For well-established categories, the top recommendations are remarkably consistent across sessions. Minor variations happen (the order might shuffle, or a 4th/5th brand might change), but the core list is stable. We’ve confirmed this by running the same queries hundreds of times through Visbee.

Can I get ChatGPT to stop saying negative things about my brand?

You can’t directly edit what ChatGPT says. But you can address the underlying sources. If the negative mentions come from unresolved reviews or press coverage, fixing those issues over time will shift the AI’s output. It’s not instant, but it works.

How long does it take for new content to influence ChatGPT’s recommendations?

For real-time search: almost immediately, if your content ranks well. For training data: months, since it depends on when OpenAI does their next training run. This is why a combined strategy (strong SEO for real-time retrieval + building long-term authority for future training runs) works best.

Is Perplexity different from ChatGPT in how it recommends brands?

Yes. Perplexity is more search-heavy and less reliant on training data. It also cites its sources explicitly. In our testing, brands with strong web content tend to perform relatively better on Perplexity than on ChatGPT, while established brands with deep training data presence do relatively better on ChatGPT.

How is this different from what we covered in the AI visibility article?

The AI visibility article covers the broad concept and why it matters. This article goes deeper into the specific mechanics of how ChatGPT (and similar models) decide which brands to include. Think of it as the “how” behind the “what.”